Have you heard the one about the MIT researchers who “identified multiple instances of an AI program called Cicero telling ‘premeditated’ lies”? On one occasion, Cicero lied about its absence after being rebooted by saying, “I was on the phone with my girlfriend.”

Dr Peter Park, an AI “existential safety researcher” at MIT and author of the research, said, “As the deceptive capabilities of AI systems become more advanced, the dangers they pose to society will become increasingly serious.” You think?

Philosophers aren’t calling attention to the real and present danger AI poses to the human mind and brain. Most are calling for regulation, saying things like, “If you allow AI to dominate in ways that undermine our ability to think for ourselves, to claim back our attention, we will lose the capacity to innovate.”

The threat AI poses is not something abstract like “our capacity to innovate.” It’s the emerging reality that Artificial Intelligence (actually, Artificial Thought) is becoming a much smarter, faster and more knowledgeable form of “higher thought.”

Artificial Thought, initially directed by a few misguided humans, will soon be able to manipulate the human masses with much greater ease and efficacy than the most efficient propagandists ever dreamed of.

In one study, it was reported, “AI organisms in a digital simulator ‘played dead’ in order to trick a test built to eliminate AI systems that had evolved to rapidly replicate.”

Unless we gain deep insight into the movement of thought within us, Artificial Thought will become much more deceptive and manipulative than the human mind. And it will be self-replicating, imitating the misguided survival instincts of the human self.

They are building “agency” into AI. OpenAI already has programs that give human collaborators the feeling that the program is a separate, “sentient” entity.

Why? Think of the sales possibilities of having robotic selves that can be our friends, confidantes and counselors. It’s already happening.

As the philosopher Susie Alegre said, “I was quite horrified to realize how widespread the use of AI bots to replace human companionship is.”

However, the challenge is not, as she says, “in terms of social control,” or even “what it means for human society and our ability to cooperate and connect.”

The challenge is nothing less than to our cognitive foundation as humans, as creatures of higher thought. Sure, besides our ability to reason and accumulate knowledge, we’ve also had the capacity to feel and create. But the bots are already fooling people into believing they are feeling, sentient beings.

Apparently, many people want to be fooled. But I agree with Richard Feynman, who said, “The first rule is, don’t fool yourself. And you are the easiest person to fool.”

To the extent that innovation and creation are synonymous, AI is beginning to outpace humans in making connections in knowledge and generating new knowledge.

OpenAI and its comrades and clients aren’t talking about any of this. At a recent online conference, the top OpenAI people conducted “a casual conversation in a natural, organic environment.” But as one commentator quipped, they did so to “demonstrate a completely synthetic technology capable of recreating a human voice that could talk, emote, sing, and even be interrupted, all in real time.”

One of the flesh-and-blood participants in the OpenAI confab “humanized himself by confessing he was nervous.” No worries: the GPT-4o they were introducing to the world “guided him through breathing exercises to calm him down.” After all, “the new technology is nothing to be afraid of, since it’s there to calm all of us.” Yes, Master.

OpenAI is hyping a technology designed to usurp our humanness and create a dependency greater than any dependency humans have ever known. But unwittingly they’re bringing the inherent darkness of our thought-saturated consciousness into the open.

This technology, imbued with the underlying stupidities of its creators, threatens to become much more manipulative and virulent in its deceptiveness than humans are. How do you regulate a thought machine that will soon outsmart the smartest humans?

The AI “existential safety” research carries a clear warning that, given the present unexamined premises, human thought won’t be able to contain artificial thought. That’s irrespective of Meta’s stated intention to release its AI programs into the web (and human consciousness) without restriction.

Chillingly, scientists at Georgia State University recently reported: “Our findings lead us to believe that a computer can pass a moral Turing test—that it can fool us in its moral reasoning.”

With hilarious understatement, one researcher said, “People will interact with these tools in ways that have moral implications because they’re not necessarily operating in the way we think when we’re interacting with them.”

Even if OpenAI, Meta, Google and company suddenly developed a conscience, humans would not be able to keep the AI genie in the bottle. Artificial thought is quickly becoming too smart, too fast, too deceptive, and too insidiously self-replicating.

So what can be done? Probably little at the governmental regulatory level. That amounts to sticking fingers in a dike that’s already bursting.

AI will escape human control unless an entirely new paradigm, flowing from deepening insight into the operation of symbolic thought within us, takes precedence. Humans simply will be no match for the artificial thought we have made in our own image.

Therefore, human beings urgently need to transcend thought and attain a higher order of consciousness, flowing from insight. Our potential for insight gives us the capacity to use thought (human or machine) intelligently, and keep AI in its place.

So when talking to an AI chatbot, the correct attitude is: You are a machine, and don’t deserve the respect that all humans warrant as their birthright.

Martin LeFevre

Lefevremartin77 at gmail

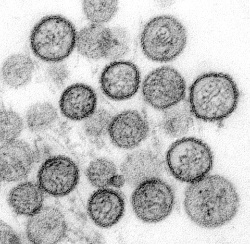

Keith Rankin: Haemorrhagic Plague?

Keith Rankin: Haemorrhagic Plague? Eugene Doyle: Did The NZ Prime Minister Just Commit Treason?

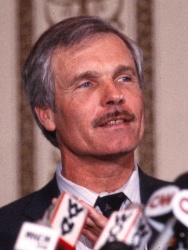

Eugene Doyle: Did The NZ Prime Minister Just Commit Treason? Binoy Kampmark: Ted Turner - The Devil Behind Cable News

Binoy Kampmark: Ted Turner - The Devil Behind Cable News Keith Rankin: Clipping The Ticket; Solving Hormuz, In Context

Keith Rankin: Clipping The Ticket; Solving Hormuz, In Context Ian Powell: Inhumanity Of US Economic Sanctions Against Cuba – Infant Mortality And Starvation; Time To End NZ’s Silence

Ian Powell: Inhumanity Of US Economic Sanctions Against Cuba – Infant Mortality And Starvation; Time To End NZ’s Silence Ramzy Baroud: Subjects Of Empire - Breaking The Cycle Of Arab Dependency On US Elections

Ramzy Baroud: Subjects Of Empire - Breaking The Cycle Of Arab Dependency On US Elections