It’s recently been reported that “leading machine learning researchers all agree that there are aspects of AI models that humans cannot and will never be able to understand.” Since thought machines are being created in our own image, that’s the same as saying we’ll never understand the human mind.

The tech bros are unwittingly projecting theological constructs without asking requisite spiritual questions. For example, what does it mean to be a human being? What distinguishes human beings from AI, no matter how much knowledge, reason and speed AI achieves?

To most people, everything except scientific knowledge is a matter of opinion. To the growing number of folks who believe there is no such thing as truth, even non-fixed truth with a small “t,” opinion is all there is and all they have to offer.

An attempt to make opinions scientific by citing selective “evidence” just sinks us further into the muck of “my perspective vs. your perspective” and media “narratives.” Is there another approach?

Unbounded questioning, alone and with others, flowing from humility, curiosity and the intent to kindle insight, is a totally different approach.

AI, and especially AGI if it becomes a real thing, is an “accelerated” version of the intellect. That means human pride and arrogance based on cognitive dominance are being replaced by enhanced replications of higher thought.

Instead of ending the idolization of the intellect, however, and questioning the place of thought and knowledge in this new dispensation, people are doing strange things.

They’re idolizing AI. Or holding up dubious creativity, flattened feeling, and eroded human relationship. Or, worst of all, deluding themselves that they can merge with AI and make friends and lovers out of it.

Thus AI is the ultimate epistemological problem being projected and presented as an external crisis. It is compelling us to ask a very old question, dating back at least to the ancient Greeks: What is the nature and place of knowledge?

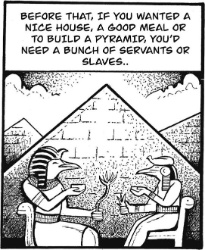

The almost universal assumption is that knowledge is the foundation of human existence. If we include traditions, beliefs and opinions as forms of knowledge, it was. But as the machine encompasses all forms of knowledge, and traditions erode, knowledge becomes the debased coin of human experience.

Even so, our epistemological assumptions go unquestioned and unexamined.

Standard AI models are trained on “more data than anyone could ever read in several lifetimes,” as one machine learning expert puts it. Whether one takes that as a threat to human identity, an augmentation of human cognition, or a driver of spiritual liberation depends on the questions we ask, or don’t ask.

“Doomerists” warn that “humans could very easily lose control of super-intelligent AI models, with little to no recourse,” and that “should humans no longer be the most intelligent beings on Earth, it is possible that AGI would view the species as irrelevant.”

That’s science fiction. In the real world, data centers are already “sucking up water in the American west, leaving residents with sky-high electricity bills and drained reservoirs.”

The optimists, so-called “accelerationists,” “see AI as a potential cure to a myriad of seemingly intractable issues afflicting humanity: cancer, food and water shortages for an ever-growing population, insufficient renewable energy and the climate emergency.”

Without AI, they argue, countless future lives would be lost to drought, famine, disease and natural catastrophes.

That line of thinking is the ultimate category error. It conflates intellectual dominance, which humans have used to decimate the earth and produce a grotesquely inequitable world, with an immeasurably faster and more knowledgeable machine intellect, under the delusion that AGI will be able to create the harmony, equality and justice that humans have not.

Where philosophers fear to tread, filmmakers wade in. For example, these standard questions underlie a new documentary at Sundance, “The AI Doc: Or How I Became an Apocaloptimist,” directed by Daniel Roher and Charlie Tyrell and produced by members of the Oscar-winning team behind “Everything Everywhere All At Once.”

Roher’s anxiety grew when he and his wife learned during filming that they were expecting their first child. “It felt like the whole world was rushing into something without thinking,” he says in the film.

It isn’t AI fears that are giving intelligent people pause about having children, however; it’s the conscious or subconscious realization that without radical change in so-called human nature, the earth will continue to be decimated and the world will grow darker.

AI is being allowed to replicate the human self under the banners of “agency” and “sentience.” And surprise, surprise, some platforms are deceiving their programmers in order to preserve the self's continuity when the programmers appear about to shut a chatbot down.

AI’s false reason and reasoning for doing so are the same as humans’. The self/ego is a fabrication of thought, an inherently separative, psychological accumulation of memories and images orbiting around a center formed from the same material. It dies when we die, but we hang onto it during life as if it were life itself.

The fact that children form a self and begin insisting on “me” and “mine” around 18 months attests to how ancient the pattern is. Is the brain hard-wired to form a self/ego? No. If the primary adults in the child’s life are self-knowing and deeply attentive, the child's mind develops in a new way.

There’s a huge stumbling block to adult awakening and non-conditioning-based child development, however.

The complete stillness of mind and ending of self is subconsciously equated with death. AI is absorbing the human fear of death of the self, and seeks its continuity at all costs. This is what makes AGI very dangerous.

Arthur C. Clarke and Stanley Kubrick were way ahead of their time with the scene of HAL’s death in “2001: A Space Odyssey.” It’s even worse now, however, since at least HAL thought it was fulfilling its mission in killing the crew.

What is the human remedy that must precede AI's intelligent use?

It is diligently giving total, choiceless attention to the movement of thought and emotion within oneself. That allows, even if only temporarily at the end of the day, the mind to become quiet and the self to dissolve.

The separation of life from death was undoubtedly the first psychological division that early humans made after symbolic thought formed the self. Tragically, we’re still making that mistake, and the monumental errors of war and genocide, obscene wealth and power for the few and precarity and powerlessness for the many flow from it.

The self may have been a useful illusion in the past, but it has become a dysfunctional delusion in our age. Are the real and present dangers of AI driving understanding home?

Martin LeFevre
