Protecting Facebook Live from Abuse, Investing Research

May 14, 2019

Protecting Facebook Live from Abuse and Investing in Manipulated Media Research

By Guy Rosen, VP Integrity

Following the horrific terrorist attacks in New Zealand, we’ve been reviewing what more we can do to prevent our services from being used to cause harm or spread hate. As a direct result, starting today, people who have broken certain rules on Facebook, including our Dangerous Organizations and Individuals policy, will be restricted from using Facebook Live.

Tackling these threats also requires technical innovation to stay ahead of the kind of adversarial media manipulation we saw after Christchurch, when some people modified the video to avoid detection so they could repost it after it had been taken down. This will require research across industry and academia. To that end, we’re also investing $7.5 million in new research partnerships with leading academics from three universities, designed to improve image and video analysis technology.

Restrictions to Live

The overwhelming majority of people use Facebook Live for positive purposes, like sharing a moment with friends or raising awareness for a cause they care about. Still, Live can be abused and we want to take steps to limit that abuse.

Before today, if someone posted content that violated our Community Standards, on Live or elsewhere, we took down their post. If they kept posting violating content, we blocked them from using Facebook for a certain period of time, which also removed their ability to broadcast Live. And in some cases, we banned them from our services altogether, either because of repeated low-level violations or, in rare cases, because of a single egregious violation (for instance, using terror propaganda in a profile picture or sharing images of child exploitation).

Today we are tightening the rules that apply specifically to Live. We will now apply a ‘one strike’ policy to Live in connection with a broader range of offenses. From now on, anyone who violates our most serious policies will be restricted from using Live for set periods of time – for example 30 days – starting on their first offense. For instance, someone who shares a link to a statement from a terrorist group with no context will now be immediately blocked from using Live for a set period of time.

We plan on extending these restrictions to other areas over the coming weeks, beginning with preventing those same people from creating ads on Facebook.

We recognize the tension between people who would prefer unfettered access to our services and the restrictions needed to keep people safe on Facebook. Our goal is to minimize risk of abuse on Live while enabling people to use Live in a positive way every day.

Investing in Research into Manipulated Media

One of the challenges we faced in the days after the Christchurch attack was a proliferation of many different variants of the video of the attack. People shared edited versions of the video, not always intentionally, which made the variants hard for our systems to detect.

Although we deployed a number of techniques to eventually find these variants, including video and audio matching technology, we realized that this is an area where we need to invest in further research.

That’s why we’re partnering with The University of Maryland, Cornell University and The University of California, Berkeley to research new techniques to:

• Detect manipulated media across images, video and audio, and

• Distinguish between unwitting posters and adversaries who intentionally manipulate videos and photographs.

Dealing with the rise of manipulated media will require deep research and collaboration between industry and academia; we need everyone working together to tackle this challenge. These partnerships are only one piece of our efforts to work with academics and our colleagues across industry. In the months to come, we will form more partnerships so we can all move as quickly as possible to innovate in the face of this threat.

This work will be critical for our broader efforts against manipulated media, including deepfakes (videos intentionally manipulated to depict events that never occurred). We hope it will also help us to more effectively fight organized bad actors who try to outwit our systems, as we saw happen after the Christchurch attack.

These are complex issues and our adversaries continue to change tactics. We know that it is only by remaining vigilant and working with experts, other companies, governments and civil society around the world that we will be able to keep people safe. We look forward to continuing our work together.

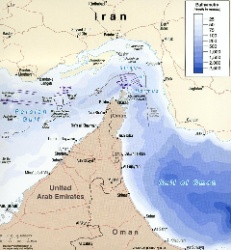
