Lyndon Hood: The Social Investment Model, Explained

By Lyndon Hood

First published on Werewolf

Hello everyone, please be seated.

As Chairman-at-large of the National Committee for Ideas that Sounded Good at the Time, I get asked a lot about this social investment strategy lark. It looks to be a big feature of the upcoming budget and they reckon it’s a revolutionary approach to managing the fiscal ramifications of population-based wellbeing enhancement, so let’s give it a squizz. Listen carefully and all should become clear.

Except for you in seat 7F there – our algorithms have predicted this will all go completely over your head, so how about you just grab that colouring-in book and try not to distract the others.

Now first you have to understand this government has been making great strides in the field of evidence-based policy making. For example one line of research is to discover how much evidence a minister of the crown can ignore when making policy. So far we know it’s “a lot”. There’s a hypothesis that your typical minister can ignore more information than is presently contained in the universe. It’s a tricky one to prove, but we’re working on it and I can’t say it’s wrong as of press time.

Second, some of you might not know the term ‘big data’. Well I’ve looked into this for you and it turns out big data is a way of making computers a bit racist. It’s also the reason Facebook seems to think I live in a different city than I in fact do. But big data has proved useful in many commercial fields. For example, analytics companies can now determine with terrifying accuracy which governments they can make the most money selling ‘big data’ engines to.

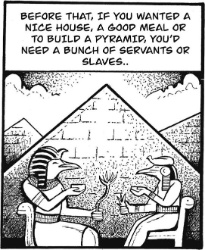

For too long social welfare has muddled along with bipartisan policies like shouting at the jobless or not helping people with mental health issues, without scientifically confirming if those methods work. So now we have ‘social investment’, which is a way of explaining that your welfare policy is good, actually, because of numbers. The idea, you see, is you’re making welfare interventions based on their actual measured outcomes. It turns out people have looked into this and often the best way to get good outcomes is to just give everyone more money. So this social investment idea is something the government’s come up with to avoid doing that.

Now, if you want to compare the outcomes of things, you have to turn them into something you can count. Let’s suppose you want to know how your programme of hitting beneficiaries with sticks affects their personal wellbeing, helps them fulfil their potential, and benefits wider society. In that case, personal wellbeing would be measured in terms of how much the individual costs the government. Fulfilled potential, on the other hand, might be measured in how much the individual costs the government. The benefits to wider society are obviously trickier to work out, but one popular metric is how much the individual costs the government. (If you’re wondering how all this might affect you, I recommend getting a regular medical checkup from your accountant.)

A simplified version of the process might pan out as follows: Take your beneficiary or your patient or your newborn child or whatnot, enter its vital statistics, perform a regression based on anomalies observed in the original sample, subtract the difference between the cradle and the grave and multiply by the sum of human dignity. Wait a moment, that's wrong. Dignity hasn't been a factor for years. Anyway, once you're done maximizing your divisions, sweep the remainder up into a small pile, and then offer them some bootstraps to pull themselves up by or whatever else the system recommends.

Another issue is, if you’re working from data you have to be quite careful to use it properly. Making sure to take all the information into account for your conclusions, not picking and choosing, and so on. Otherwise you might get perverse consequences (and not the good kind). As it happens this government’s been practising this for a while. For example, they worked out people who were on the benefit were at greater risk than people who weren’t, so they kicked everyone off the benefit. Which makes perfect sense if you don’t think about it too hard. And in Corrections, where they can clearly trace the statistical effectiveness of every intervention they apply, reoffending rates are going back up again for some reason they apparently can’t put their finger on. So, third time being the charm, if they have another go at this data stuff now, I reckon they’ll finally get the hang of it.

Any questions? Well, there is a risk of stigmatising people for life based on a statistical guess, but I think you can trust us to avoid that. Any other questions? Not you, 7F, stop wasting everyone’s time pretending you’ve got a contribution to make.

Good. There will now be a short test. I’ve written the answers on the whiteboard here so I don’t miss out on my performance bonus.
