The EU Needs An Artificial Intelligence Act That Protects Fundamental Rights

Access Now and over 110 civil society organisations have laid out proposals to make sure the European Union’s Artificial Intelligence Act addresses the real-world impacts of the use of artificial intelligence (AI), places fundamental rights protection front-and-centre, and maintains a broad definition of AI systems.

“Access Now’s priority is not to have an EU law on AI, but to have one that is an effective instrument to protect people’s rights,” said Fanny Hidvégi, Europe Policy Manager at Access Now. “We’ve laid out the steps needed to boost the proposed regulation’s human rights standards, and are looking forward to working with the Council and Parliament to guarantee they are achieved.”

Access Now and AlgorithmWatch sounded the alarm last week when leaked information suggested that the Council of the European Union is planning to drastically narrow the definition of AI systems, potentially excluding many technologies that impact human rights. The AI Act needs to be amended, but those amendments must increase protections for fundamental rights, not water them down.

“The European Union has a unique chance to set the global standard for AI regulation, just like it did with the General Data Protection Regulation (GDPR) which is now seen as the golden standard,” said Daniel Leufer, Europe Policy Analyst at Access Now. “With this joint statement, civil society has laid out clear guidelines on how to make the AI Act a world-leading piece of legislation, and we urge lawmakers to take these recommendations on board.”

Access Now and a coalition of civil society organisations call on the Council of the European Union, the European Parliament, and all EU member state governments to ensure that the Artificial Intelligence Act achieves a series of goals, including:

- Create a cohesive, future-proof approach to the "risk" of AI systems by adding a mechanism to update all risk categories in the Act, including prohibitions;

- Extend and improve Article 5’s list of prohibitions to cover the full scope of AI systems posing an unacceptable risk to fundamental rights;

- Add obligations on users of high-risk AI systems to facilitate accountability to those impacted by AI systems;

- Ensure consistent and meaningful transparency to the public; and

- Add individual rights to ensure meaningful redress for people affected by AI systems.

Read the full list of goals.

IPMSDL: Condemn The Killing Of Children, Bombing In Manipur, And Violent Repression Of People’s Protests

IPMSDL: Condemn The Killing Of Children, Bombing In Manipur, And Violent Repression Of People’s Protests Médecins Sans Frontières: Three Years On, Outbreaks Everywhere - MSF Urges Boost To Sudan’s Vaccination Programs

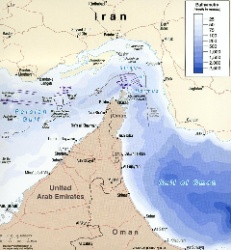

Médecins Sans Frontières: Three Years On, Outbreaks Everywhere - MSF Urges Boost To Sudan’s Vaccination Programs UN News: Uncertainty Continues Over Safety In The Strait Of Hormuz

UN News: Uncertainty Continues Over Safety In The Strait Of Hormuz Australian Museum: Celebrate Sir David Attenborough's 100th Birthday With The Australian Museum

Australian Museum: Celebrate Sir David Attenborough's 100th Birthday With The Australian Museum Clean Shipping Coalition: Shipping - IMO’s Net Zero Framework Progresses But ENGOs Slam Unnecessary Delay

Clean Shipping Coalition: Shipping - IMO’s Net Zero Framework Progresses But ENGOs Slam Unnecessary Delay Gena Wolfrath, IMI: Understanding News Fatigue—and How To Stay Informed Without Overload

Gena Wolfrath, IMI: Understanding News Fatigue—and How To Stay Informed Without Overload